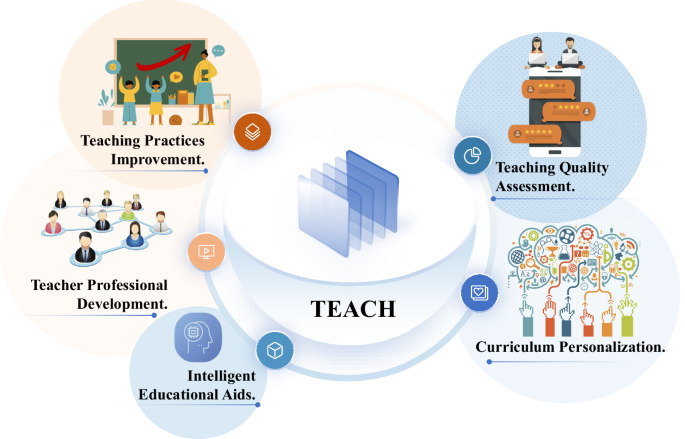

A Multi-Modal Dataset for Teacher Behavior Analysis in Offline Classrooms

In this section, we present the three sub-datasets that form the MM-TBA dataset. The teaching action detection sub-dataset focuses on identifying teacher actions within teaching videos. The teacher lecture evaluation sub-dataset provides a comprehensive, multi-dimensional assessment of lecture content. The instructional design sub-dataset employs multivariate analysis methods to annotate instructional designs. Figure 2 illustrates the overall framework for constructing the MM-TBA dataset.

The overall framework of constructing the MM-TBA dataset.

Teaching Action Detection Sub-Dataset

The teaching action detection sub-dataset focuses specifically on the hand movements of teachers, categorizing these actions for every second of the teaching videos. Specifically, we defined six action categories: “pointing”, “beat gesture”, “descriptive gesture”, “writing”, “interacting”, and “neutral”. Given the complexity of developing this sub-dataset, we detail the process through three key aspects: data collection, data preprocessing, and data annotation.

Data Collection

We recorded videos of trainee teachers instructing in micro-classrooms between 2018 and 2021. The cameras were positioned at 0° and 30° relative to the direction facing the teacher’s blackboard. We obtained the instructors’ informed consent prior to filming and informed them that the recordings would be used for teaching, research, and related purposes. Additionally, we anonymized the data in the dataset to preserve the instructors’ privacy and the security of their information. In total, we collected 4,839 teaching videos.

The trainee teachers involved in this study are pre-service graduates from teacher education programs. Prior to their internships, they completed comprehensive coursework in educational theory and pedagogical methods, supplemented by school-based training. Under the guidance and supervision of experienced mentors, they applied these theories and methods in practical settings. Consequently, these teachers acquired practical teaching experience, contributing to the authenticity and representativeness of the collected teaching data.

Data collected at the beginning or end of the semester may not fully represent instructional practices throughout the entire term, and teaching styles can differ significantly by subject and instructor. To minimize these biases, we ensured data diversity by selecting courses from different points within the academic term and across multiple subjects, including Mathematics and Information Technology, spanning middle and high school levels. The gender distribution of teachers is approximately 2:3. This approach helps the dataset reflect typical classroom environments, thereby enhancing the generalizability of the findings. We present the attribute distribution of the collected data in Fig. 3 and have uploaded the metadata file to the data repository.

The attribute distribution of the MM-TBA dataset.

Data Preprocessing

Given the unique teaching style of each teacher [16], we meticulously screened all the videos to ensure quality and relevance. Videos were excluded if they contained excessive repetitive movements, which we defined as a teacher repeating the same gesture or action more than six times within a 10-second window. We also removed segments showing irregular teaching behaviors, such as the teacher facing away from students for over 10 seconds or leaving the podium more than twice per minute. Similarly, segments with no teaching-related actions for over 10 consecutive seconds were discarded. To keep the focus on the teacher, we excluded segments where non-teacher individuals (e.g., students or assistants) blocked the view of the teacher for more than 20% of the duration. These criteria ensured the dataset contained meaningful instructional content.
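
The repetition criterion above lends itself to a sliding-window check. The following is a minimal sketch, not the authors’ screening code: it assumes gesture occurrences are available as hypothetical `(timestamp_seconds, label)` pairs sorted by time, and the function name and parameters are illustrative.

```python
from collections import defaultdict, deque


def has_excessive_repetition(events, max_repeats=6, window_s=10.0):
    """Return True if any label occurs more than `max_repeats` times
    within some `window_s`-second window.

    `events` is a hypothetical list of (timestamp_seconds, label) pairs,
    sorted by timestamp.
    """
    recent = defaultdict(deque)  # label -> timestamps still inside the window
    for t, label in events:
        q = recent[label]
        q.append(t)
        # Drop occurrences that are window_s or more seconds older than t.
        while q and t - q[0] >= window_s:
            q.popleft()
        if len(q) > max_repeats:
            return True
    return False
```

Under these assumptions, seven occurrences of the same gesture at one-second intervals would be flagged, while the same seven occurrences spread over twelve seconds would not.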

As a result, we retained 354 videos of approximately 300 trainee teachers with rich movements and standardized teaching behaviors. We removed the opening and closing minutes of each teaching video because they are irrelevant to the classroom content. Subsequently, we used FFmpeg [17] (a video editing tool) to cut all the videos into approximately 10-second clips and performed a second round of filtering. We ultimately obtained 3,173 video clips with a total duration of approximately 32,000 seconds. To safeguard participants’ personal information and privacy, and to mitigate data sensitivity, we extracted visual features from the data and stored them as pkl files. The data was then divided into training and test sets in an 8:2 ratio.
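
The clipping and splitting steps can be sketched as follows. This is an illustration under stated assumptions rather than the authors’ pipeline: it builds (but does not run) an FFmpeg command that uses the segment muxer, and it splits at the clip level with a fixed random seed. The file names, the seed, and the choice to split by clip rather than by teacher are all assumptions.

```python
import random


def segment_command(src, out_pattern, clip_seconds=10):
    """Build an FFmpeg command that cuts `src` into fixed-length clips.

    With stream copy ("-c copy"), cuts fall on keyframes, so clip
    lengths are only approximately `clip_seconds`.
    """
    return [
        "ffmpeg", "-i", src,
        "-c", "copy",
        "-f", "segment",
        "-segment_time", str(clip_seconds),
        "-reset_timestamps", "1",
        out_pattern,
    ]


def split_train_test(items, train_ratio=0.8, seed=0):
    """Shuffle `items` reproducibly and split them into train and test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


# Hypothetical clip identifiers for the 3,173 clips reported in the text.
clips = [f"clip_{i:04d}" for i in range(3173)]
train, test = split_train_test(clips)
```

With this rounding rule, 3,173 clips would give 2,538 training clips and 635 test clips; the actual counts in the released split may differ.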

Data Annotation

Initially, we used FFmpeg to extract frames from all video clips, which run at 30 frames per second, sampling one frame per second. Building on the success of the YOLO series in object detection, we employed YOLO [18] to identify bounding boxes around teachers in the videos. Each bounding box locates the teacher’s spatial position within the frame and is represented by two pairs of (x, y) coordinates. For annotating teaching actions, we used the VIA tool (version 3.0.11) [19,20], configured with a timeline precision of 1 frame per second to enable accurate temporal segmentation. Trained annotators classified the teacher’s actions within these bounding boxes into six predefined categories. This two-step workflow (automated bounding box detection followed by manual action classification) ensured both efficiency and accuracy in the annotation process.
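
As a rough illustration of the frame sampling and the two-corner box representation described above, the sketch below builds an FFmpeg command using the `fps` video filter and defines a small bounding-box type. The class name, field names, and the normalization helper are assumptions introduced for illustration; the text does not say whether the stored coordinates are in pixels or normalized.

```python
from dataclasses import dataclass


def frame_sampling_command(clip, out_pattern, frames_per_second=1):
    """Build an FFmpeg command that keeps `frames_per_second` frames per second."""
    return ["ffmpeg", "-i", clip, "-vf", f"fps={frames_per_second}", out_pattern]


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned box given by its top-left (x1, y1) and bottom-right (x2, y2) corners."""
    x1: float
    y1: float
    x2: float
    y2: float

    def normalized(self, frame_width, frame_height):
        """Return the same box with coordinates scaled to the [0, 1] range."""
        return BoundingBox(
            self.x1 / frame_width,
            self.y1 / frame_height,
            self.x2 / frame_width,
            self.y2 / frame_height,
        )


# Hypothetical detection in a 1280x720 frame.
box = BoundingBox(320, 180, 960, 540).normalized(1280, 720)
```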

In the field of education, teachers’ gestures in the classroom are typically categorized into three types: pointing, beat, and descriptive gestures [21,22]. Pointing gestures refer to the intentional physical movements made by teachers to point to specific objects during instruction, thereby capturing students’ attention and highlighting the significance of visual information. Beat gestures involve repetitive hand movements between the waist and chin, which help teachers control the cadence and pace of their speech while emphasizing key points. Descriptive gestures depict various aspects of the semantic content of speech through hand shapes or hand movement trajectories, evoking learners’ psychological schema of this semantic content visually or metaphorically [23]. Our work expands on these categories by introducing three additional actions: “writing”, “interacting”, and “neutral” [13,24]. “Writing” refers to a teacher writing on a blackboard or electronic screen. “Interacting” signifies the teacher’s invitation for students to answer questions, thereby fostering classroom engagement. “Neutral” describes the teacher’s ordinary movements, such as hands resting naturally at their sides. Figure 4 presents examples of the six types of teaching actions.

Examples of teaching action.

Four undergraduate students with computer science and education backgrounds, along with basic knowledge of deep learning, conducted the data annotation. Their multidisciplinary background allowed them to grasp the nuances of teaching actions and the importance of high-quality data for model training. Before officially starting the annotation work, the annotators underwent specialized training covering the use of annotation tools, annotation criteria and guidelines, and practical exercises. We regularly reviewed annotation results and provided feedback based on initial model training outcomes, so that annotators consistently improved their work. This rigorous training enabled them to complete tasks efficiently and accurately while understanding the critical role of these annotations in deep learning model training. To ensure quality and minimize subjectivity, each data record was annotated by at least two independent annotators. The final temporal label was established through an additional verification and refinement process based on the two initial annotations. Additionally, we employed DeepSort [25] to track and locate teachers, ultimately generating annotation files in the AVA [10] dataset format. Figure 5 shows the specific sample counts for each category within the teaching action detection sub-dataset. Our dataset format is compatible with a wide range of video action recognition models.
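
The double-annotation check and the AVA-style output can be sketched as follows. This is a hedged illustration, not the authors’ tooling: the numeric action ids, the per-second label dictionaries, and the helper names are assumptions. The row layout follows the public AVA annotation CSV convention (video id, timestamp, normalized box corners, action id, person id).

```python
# Hypothetical id assignment for the six action categories.
ACTION_IDS = {
    "pointing": 1,
    "beat gesture": 2,
    "descriptive gesture": 3,
    "writing": 4,
    "interacting": 5,
    "neutral": 6,
}


def find_disagreements(labels_a, labels_b):
    """Return the seconds at which two annotators chose different labels.

    Each argument maps a second (int) to an action label (str). Seconds
    labeled by only one annotator also count as disagreements.
    """
    seconds = sorted(set(labels_a) | set(labels_b))
    return [s for s in seconds if labels_a.get(s) != labels_b.get(s)]


def ava_row(video_id, second, box, label, person_id):
    """Format one annotation as an AVA-style row with normalized coordinates."""
    x1, y1, x2, y2 = box
    return [
        video_id, second,
        f"{x1:.3f}", f"{y1:.3f}", f"{x2:.3f}", f"{y2:.3f}",
        ACTION_IDS[label], person_id,
    ]
```

Seconds returned by `find_disagreements` would be the natural candidates for the additional verification and refinement pass described above.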

The specific sample counts for each action category within the teaching action detection sub-dataset.

Teacher Lecture Evaluation Sub-Dataset

The teacher lecture evaluation sub-dataset aims to provide a comprehensive, multi-dimensional assessment of teacher lectures. This sub-dataset allows for more fine-grained and adaptable evaluation of teaching quality using advanced natural language processing techniques. Specifically, we employed MoviePy [26] (a Python library for video editing) to extract audio from the teaching videos, which then underwent speech-to-text processing to transcribe the teachers’ lectures. The generated text was manually verified and corrected, with each transcript averaging approximately 2,220 words. Furthermore, multiple filters were applied to remove sensitive information contained within the lecture content. In recent years, large language models have demonstrated remarkable text generation capabilities. To assess teachers’ lectures, we designed a set of teacher lecture evaluation criteria and prompt templates tailored to the specific characteristics of pedagogy [27,28]. Figure 6 shows the template for generating fine-tuning data, while Fig. 7 displays the template for creating the final evaluation report. Using these templates, we generated evaluation reports on teachers’ lectures by calling the GPT-3.5 API [29]. These reports served as training data to fine-tune the Baichuan2 [30] model. In the field of artificial intelligence, fine-tuning refers to the process of adapting a pre-trained model by making incremental adjustments to its parameters to tailor it to a specific task or dataset. This method utilizes the general knowledge acquired during pre-training, allowing the model to adapt efficiently and achieve optimal performance on a new, often smaller, target dataset [31].

Prompt template for generating fine-tuning data.

Prompt template for generating final evaluation report.

LoRA (Low-Rank Adaptation) [32] is a fine-tuning technique that reduces the number of trainable parameters by introducing low-rank matrices, thereby making the process more efficient while retaining model performance. We used LoRA to improve the fine-tuning efficiency of the large language model. During fine-tuning, we used the Adam optimizer with “beta1” set to 0.9, “beta2” set to 0.98, and epsilon set to 1e-8. We optimized the model with a learning rate of 2e-5 for 10 training epochs. Upon completing the fine-tuning, we obtained a newly optimized weight file.
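
To make the parameter saving and the optimizer settings concrete, the sketch below counts the trainable parameters of a rank-r LoRA update for a single weight matrix and applies one scalar Adam step with the hyperparameters stated above. The layer size (4096 x 4096) and the rank (8) are assumptions chosen only for illustration; the text does not report them.

```python
import math


def lora_trainable_params(d_out, d_in, rank):
    """Parameters in the low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def adam_step(param, grad, m, v, t,
              lr=2e-5, beta1=0.9, beta2=0.98, eps=1e-8):
    """Apply one bias-corrected Adam update to a scalar parameter.

    `t` is the 1-based step count; `m` and `v` are the running first
    and second moment estimates.
    """
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad * grad
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# Hypothetical layer: a 4096 x 4096 weight matrix adapted with rank 8.
full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, 8)
new_param, m, v = adam_step(param=1.0, grad=0.5, m=0.0, v=0.0, t=1)
```

For this hypothetical layer, the low-rank factors hold 65,536 parameters against 16,777,216 in the full matrix, a 256-fold reduction. On the first Adam step the update magnitude is close to the learning rate regardless of the gradient scale, which is a property of bias-corrected Adam.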

Subsequently, we proposed a method that combines a large language model with subject-specific knowledge graphs to generate more accurate teacher lecture evaluation reports. We constructed knowledge graphs representing subject-specific concepts and their relationships using school mathematics and IT textbooks aligned with national curriculum standards. In these graphs, nodes represent key knowledge points (e.g., “quadratic functions”), while edges denote relationships such as “contains” (e.g., “quadratic functions” contains “parabolas”) or “prerequisite” (e.g., “linear equations” precedes “systems of equations”). We populated the graph by manually extracting concepts from textbooks and linking them based on pedagogical dependencies. During evaluation, we processed lecture transcripts using named entity recognition to identify mentioned knowledge points, mapping them to the graph to assess coverage and coherence. This structured representation enabled us to extract relevant knowledge points from the teacher’s lecture and incorporate them into an automatically generated evaluation report (as shown in Fig. 2(b)). The automatically generated evaluation report assesses the teacher’s lecture across four dimensions: teaching phases [33,34], teaching objectives and content [35], teaching process and methods [36], and teacher-student interaction [37]. Table 2 presents examples of the teacher lecture evaluation criteria across two dimensions. In addition to detailed descriptions and associated scores for each dimension, the report includes an overall evaluation and recommendations for improving instruction. Using the fine-tuned large language model, we generated a more fine-grained and adaptable teacher lecture evaluation report by following the specified prompt template.
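
The graph structure and the coverage assessment described above can be illustrated with a toy example. This is a sketch under stated assumptions: the graph holds only the two example edges from the text, simple case-insensitive substring matching stands in for named entity recognition, and the coverage and prerequisite checks are illustrative definitions rather than the authors’ metrics.

```python
# Edges as (source, relation, target); both examples come from the text.
KNOWLEDGE_GRAPH = {
    ("quadratic functions", "contains", "parabolas"),
    ("linear equations", "prerequisite", "systems of equations"),
}


def graph_nodes(graph):
    """Collect every knowledge point that appears in any edge."""
    return {n for src, _, dst in graph for n in (src, dst)}


def mentioned_points(transcript, graph):
    """Find knowledge points named in the transcript.

    Substring matching is a stand-in for the named entity recognition
    step; it is an assumption, not the method used in the paper.
    """
    text = transcript.lower()
    return {n for n in graph_nodes(graph) if n in text}


def coverage(transcript, graph):
    """Fraction of graph nodes mentioned in the transcript."""
    return len(mentioned_points(transcript, graph)) / len(graph_nodes(graph))


def missing_prerequisites(transcript, graph):
    """List (prerequisite, topic) pairs where the topic is mentioned but its prerequisite is not."""
    seen = mentioned_points(transcript, graph)
    return sorted(
        (src, dst) for src, rel, dst in graph
        if rel == "prerequisite" and dst in seen and src not in seen
    )


transcript = ("Today we revisit quadratic functions and sketch parabolas "
              "before solving systems of equations.")
```

For this transcript, three of the four knowledge points are matched, and “linear equations” is reported as an unmentioned prerequisite of “systems of equations”.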

Instructional Design Sub-Dataset

The instructional design sub-dataset aims to systematically annotate and analyze the instructional designs created by teachers. During the trainee teachers’ practicum, we also gathered the instructional designs that matched their course material. Instructional design is a systematic activity guided by educational theory, involving the organization of instructional content and resources, the design of learning activities and environments based on learner characteristics, and ultimately supporting learners in achieving their learning objectives effectively [38]. In our work, we manually screened all collected instructional designs, retaining only those with complete teaching processes. Drawing on experience with multivariate analysis methods that combine instructional events and time sampling [15], we introduced a comprehensive approach incorporating instructional designs, teaching videos, and teacher lecture transcripts to annotate the teaching processes within the instructional designs (as shown in Fig. 2(c)). Each annotated instance represents a specific teaching process within the instructional design, corresponding to a particular segment of the teaching video and the associated lecture transcript. Annotating instructional designs enables teachers to pinpoint issues and shortcomings within their teaching methods and activity designs, allowing them to optimize their teaching schedules and place greater emphasis on key instructional points. This process enhances the scientific rigor and effectiveness of instructional design while providing teachers with essential insights for improving their teaching practices.

Data Privacy and Consent

During the construction of the MM-TBA dataset, we adhered strictly to ethical guidelines to ensure the legality of the research data and the full protection of participants’ rights. Prior to data collection, we obtained informed consent and authorization from participants via a public education platform; participants explicitly agreed to the recording and use of their teaching videos. Participants fully understood and accepted the platform’s terms before uploading their data, acknowledging that their data would be used for teaching analysis and scientific research. Additionally, we ensured that all other individuals featured in the videos were informed and had consented to their inclusion. In accordance with ethical review procedures, our research and data sharing have received ethical approval from the affiliated institution. The IRB number is ZSRT2025141.

All collected data is processed in accordance with applicable data protection regulations to ensure the security of participants’ personal information and privacy. To maintain data privacy and security, we masked faces in the teaching videos for action detection purposes. We do not directly provide videos and images of classroom instruction. The teaching actions of the trainee teachers were visually encoded and saved as feature files to anonymize the participants’ information. Faces in the images included in the paper were also obscured to safeguard privacy. For the lecture content and instructional design data, we employed a multi-round filtering process involving multiple reviewers to carefully screen and anonymize potentially sensitive information, ensuring that individual identities cannot be tracked. These anonymization steps do not compromise the usability of the dataset. We ensure that the dataset remains publicly accessible and reusable while adhering to privacy standards.
